Deep reinforcement learning for real-time energy dispatch in smart grids with high renewable penetration

DOI:

https://doi.org/10.18686/cest633

Keywords:

deep reinforcement learning; energy dispatch; smart grids; renewable integration; Markov decision process; actor–critic algorithms; storage optimization; real-time control

Abstract

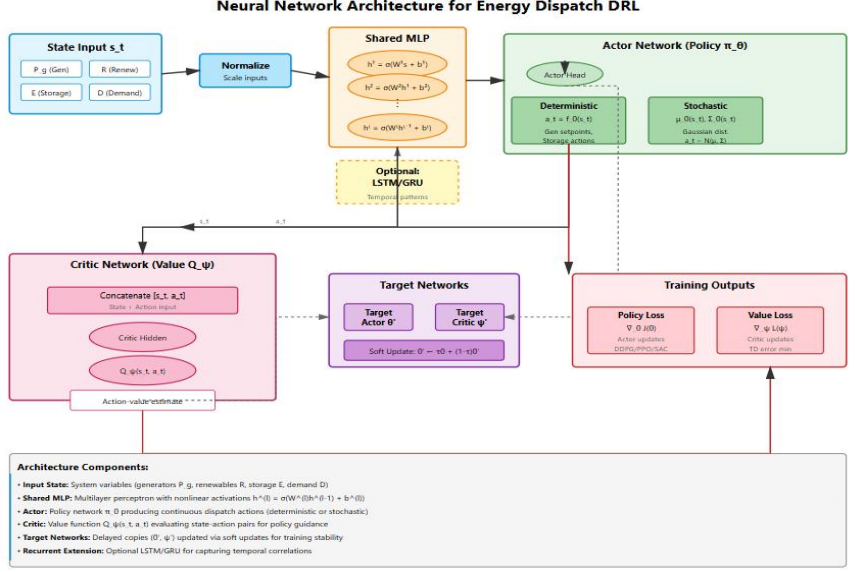

The increasing penetration of Renewable Energy (RE) in modern Smart Grids (SG) introduces substantial variability and uncertainty, posing critical challenges to real-time energy dispatch. Traditional optimization and rule-based methods, while effective under deterministic conditions, adapt poorly to stochastic RE generation and fluctuating demand. This study develops a Deep Reinforcement Learning (DRL) model for real-time dispatch in renewable-dominated SG, formulating the problem as a constrained Markov Decision Process (MDP). Three actor-critic algorithms, Deep Deterministic Policy Gradient (DDPG), Proximal Policy Optimization (PPO), and Soft Actor-Critic (SAC), learn adaptive policies that jointly minimize operational costs, enhance renewable integration, and maintain grid reliability. A modified IEEE 33-bus distribution system with high RE penetration is simulated using historical solar and wind profiles, storage dynamics, and realistic demand patterns. A comparative analysis against rule-based heuristics, deterministic Mixed-Integer Linear Programming (MILP), and two-stage stochastic optimization shows that DRL achieves superior performance across multiple dimensions. SAC delivers the best results, reducing operational costs by 20%, achieving 92.8% renewable utilization, and lowering loss-of-load probability to 0.5%, while maintaining real-time computational feasibility (0.41 s per dispatch interval). Constraint satisfaction validation confirms 99.8% voltage compliance and 100% thermal limit adherence. Scalability analysis on the IEEE 123-bus network reveals sub-quadratic training-time scaling and effective model transferability under parameter variations. Sensitivity analyses confirm robustness under varying prediction errors, dispatch granularities, and storage configurations. These results establish DRL as a scalable, reliable, and cost-efficient approach for next-generation SG dispatch under RE uncertainty.
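The MDP formulation mentioned in the abstract can be illustrated with a toy sketch. The following is not the authors' IEEE 33-bus model: it assumes a single bus with one storage unit, and every name and number in it (`StorageDispatchEnv`, the capacity, power limit, efficiency, price, and penalty values) is hypothetical and chosen only for illustration. It shows how one dispatch interval might map a state (storage level, renewable output, demand) and an action (charge or discharge setpoint) to a reward equal to the negative operating cost, with unserved load penalized heavily.

```python
from dataclasses import dataclass


@dataclass
class StorageDispatchEnv:
    """Toy single-bus dispatch MDP (illustrative only, all values hypothetical)."""
    capacity_kwh: float = 100.0   # assumed storage energy capacity
    max_power_kw: float = 50.0    # assumed charge/discharge power limit
    efficiency: float = 0.95      # assumed one-way storage efficiency
    dt_h: float = 0.25            # assumed 15-minute dispatch interval
    grid_price: float = 0.20      # assumed import price per kWh
    shed_penalty: float = 5.0     # assumed penalty per kWh of unserved load
    grid_limit_kw: float = 80.0   # assumed grid import limit
    soc_kwh: float = 50.0         # current stored energy

    def step(self, renewable_kw, demand_kw, action):
        """Apply one dispatch action in [-1, 1] (negative = discharge)."""
        action = max(-1.0, min(1.0, action))
        power = action * self.max_power_kw
        if power >= 0:
            # Charging is limited by the remaining headroom in the storage.
            headroom = (self.capacity_kwh - self.soc_kwh) / (self.efficiency * self.dt_h)
            power = min(power, headroom)
            self.soc_kwh += power * self.efficiency * self.dt_h
        else:
            # Discharging is limited by the energy currently stored.
            available = self.soc_kwh * self.efficiency / self.dt_h
            power = max(power, -available)
            self.soc_kwh += power * self.dt_h / self.efficiency
        self.soc_kwh = max(0.0, min(self.capacity_kwh, self.soc_kwh))

        # Power balance: demand plus charging, minus renewable output.
        net_kw = demand_kw + power - renewable_kw
        import_kw = min(max(net_kw, 0.0), self.grid_limit_kw)
        unserved_kw = max(net_kw - import_kw, 0.0)
        surplus_kw = max(-net_kw, 0.0)  # excess generation that is spilled

        cost = (import_kw * self.grid_price + unserved_kw * self.shed_penalty) * self.dt_h
        state = (self.soc_kwh / self.capacity_kwh, renewable_kw, demand_kw)
        info = {"import_kw": import_kw, "unserved_kw": unserved_kw, "surplus_kw": surplus_kw}
        return state, -cost, info


env = StorageDispatchEnv()
state, reward, info = env.step(renewable_kw=30.0, demand_kw=40.0, action=0.0)
print(state, reward, info)
```

In a DRL setting, the fixed `action=0.0` above would be replaced by the output of a trained actor network (DDPG, PPO, or SAC), and network constraints such as voltage and thermal limits would enter as additional penalty or constraint terms, which this sketch omits.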

License

Copyright (c) 2026 Hayder M. Ali, Catherine Solomon, Mercy Beulah Edward, Kolluru Suresh Babu, Aseel Smerat, Tanweer Alam, Sardor Sabirov, Sudhakar Sengan

This work is licensed under a Creative Commons Attribution 4.0 International License.

